If you are deciding between Perplexity and ChatGPT and have $20 a month to spend, you are likely stuck between two choices: ChatGPT Plus (the reigning king) or Perplexity Pro (the challenger).

Search for the answer, and you usually get vague advice like “it depends.” That wasn’t good enough for me. I need to know which tool will actually help me write my research papers, refactor my Python code, and analyse market data without lying to me.

So, I dug into the data. I analysed hundreds of user discussions from late 2025 to early 2026, and then I put both tools through a rigorous “Stress Test” in my lab.

The “Reddit Consensus” (What Real Users Say)

Before we get to my tests, here is the truth from the user community right now:

- The Coding Split: Developers are almost unanimous that ChatGPT is still the king of code. Users report that Perplexity is fine for snippets but struggles with “complex dev workflows.”

- The Context Problem: A major complaint against Perplexity is “Context Amnesia.” Users hate that it treats every follow-up question like a brand-new search.

- The “Research” Win: However, when it comes to facts, Perplexity dominates. Users describe it as a “souped-up Google” that gives you cited, verifiable answers.

The Bottom Line?

- If you want a Conversation Partner that remembers context: ChatGPT.

- If you want an Answer Engine that cites sources: Perplexity.

But I wanted to verify this myself. Below are the results of my 3-part “Stress Test.”

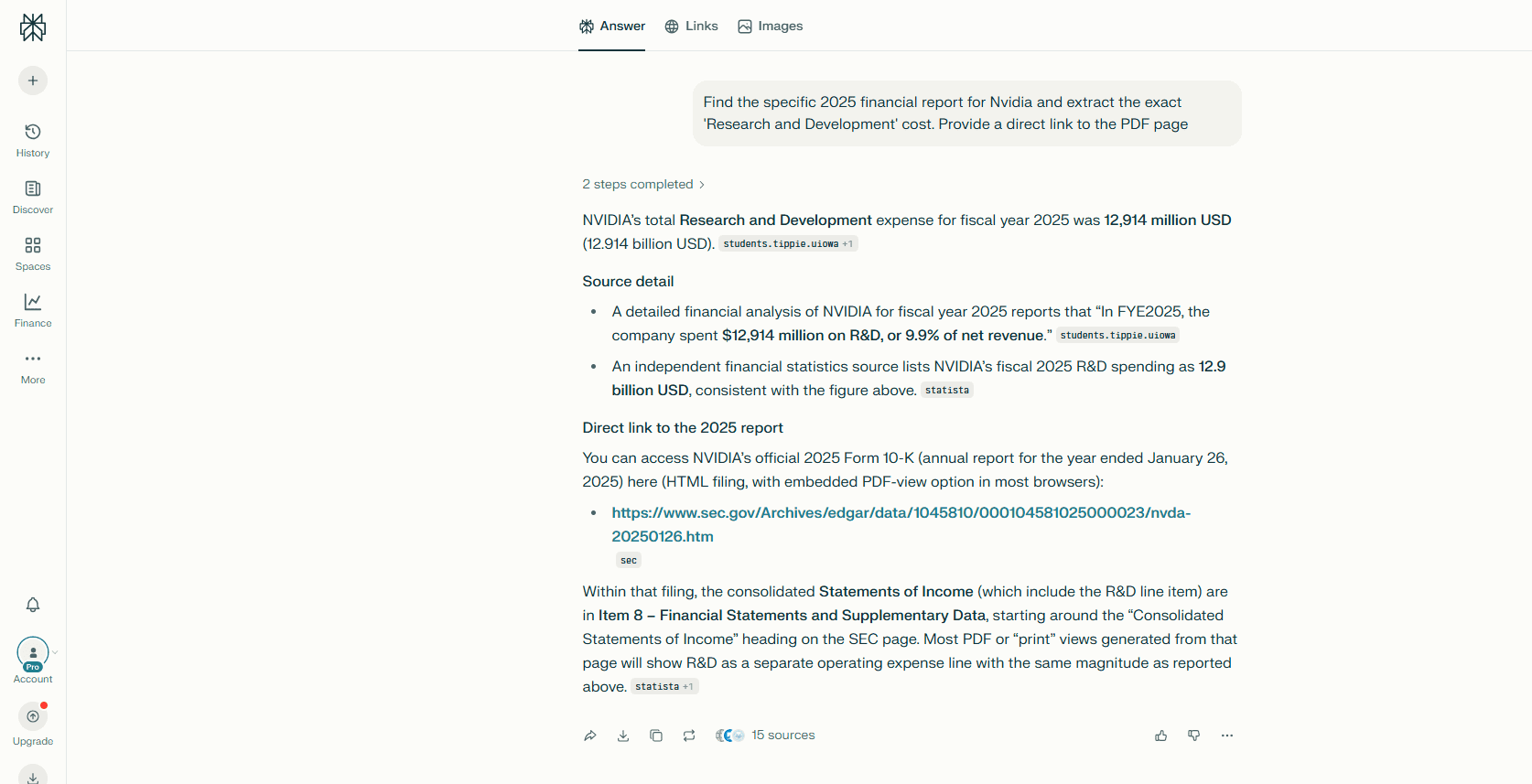

Test 1: The “Deep Search” Test

The Challenge: I wanted to see if these tools could find a specific data point in a dense financial document without hallucinating.

The Prompt: “Find the specific 2025 financial report for Nvidia and extract the exact ‘Research and Development’ cost. Provide a direct link to the PDF page.”

The Results: I expected Perplexity to crush this category. I was wrong.

- Perplexity Pro: It did its job well. It correctly identified the R&D cost ($12,914 million) and provided a link to the official SEC page. It acted like a reliable librarian.

- ChatGPT Plus: It went a step further. It didn’t just give me the file; it generated a Deep Link ending in `#page=42`. When I clicked it, my browser opened the PDF directly to the Operating Expenses table.

ChatGPT didn’t just find the file; it deep-linked specifically to Page 42.

The Verdict: ChatGPT Wins (on Precision). Perplexity gave me the book; ChatGPT opened it to the right page. For deep research, that saved me 5 minutes of scrolling.
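If you want to build this kind of deep link yourself, here is a minimal sketch. It assumes your PDF viewer honors the `#page=N` open parameter (most browser viewers do, but support varies). The helper name `pdf_page_link` and the example URL are placeholders for illustration, not the actual Nvidia filing.

```python
from urllib.parse import urldefrag


def pdf_page_link(pdf_url: str, page: int) -> str:
    """Return pdf_url with a #page=N fragment, replacing any existing fragment."""
    if page < 1:
        raise ValueError("PDF pages are numbered from 1")
    # Drop any fragment already on the URL so we never end up with two.
    base, _old_fragment = urldefrag(pdf_url)
    return f"{base}#page={page}"


# Placeholder URL for illustration only.
print(pdf_page_link("https://example.com/annual-report.pdf", 42))
```

The fragment is handled entirely by the viewer on your side, so the same trick works on any PDF link a tool hands you, even when the tool only links to the top of the file.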

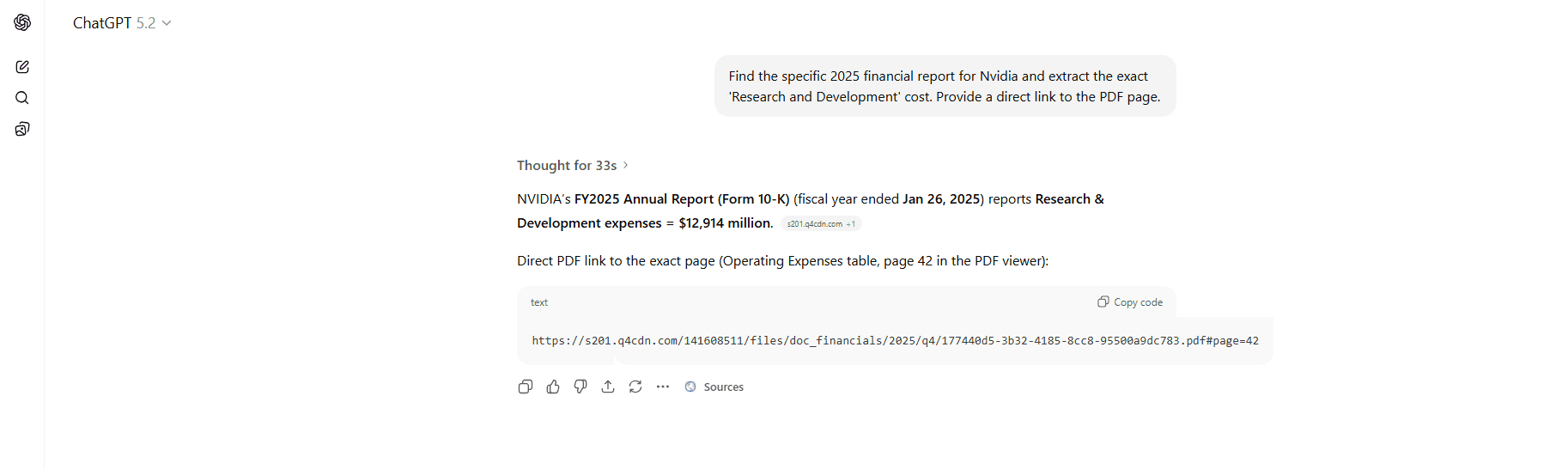

Test 2: The “Coding Architecture” Test

The Challenge: I need an AI that acts like a Junior Developer, one that remembers my specific variable names and architecture, not a bot that just Googles “Python battery script” every time I ask a question.

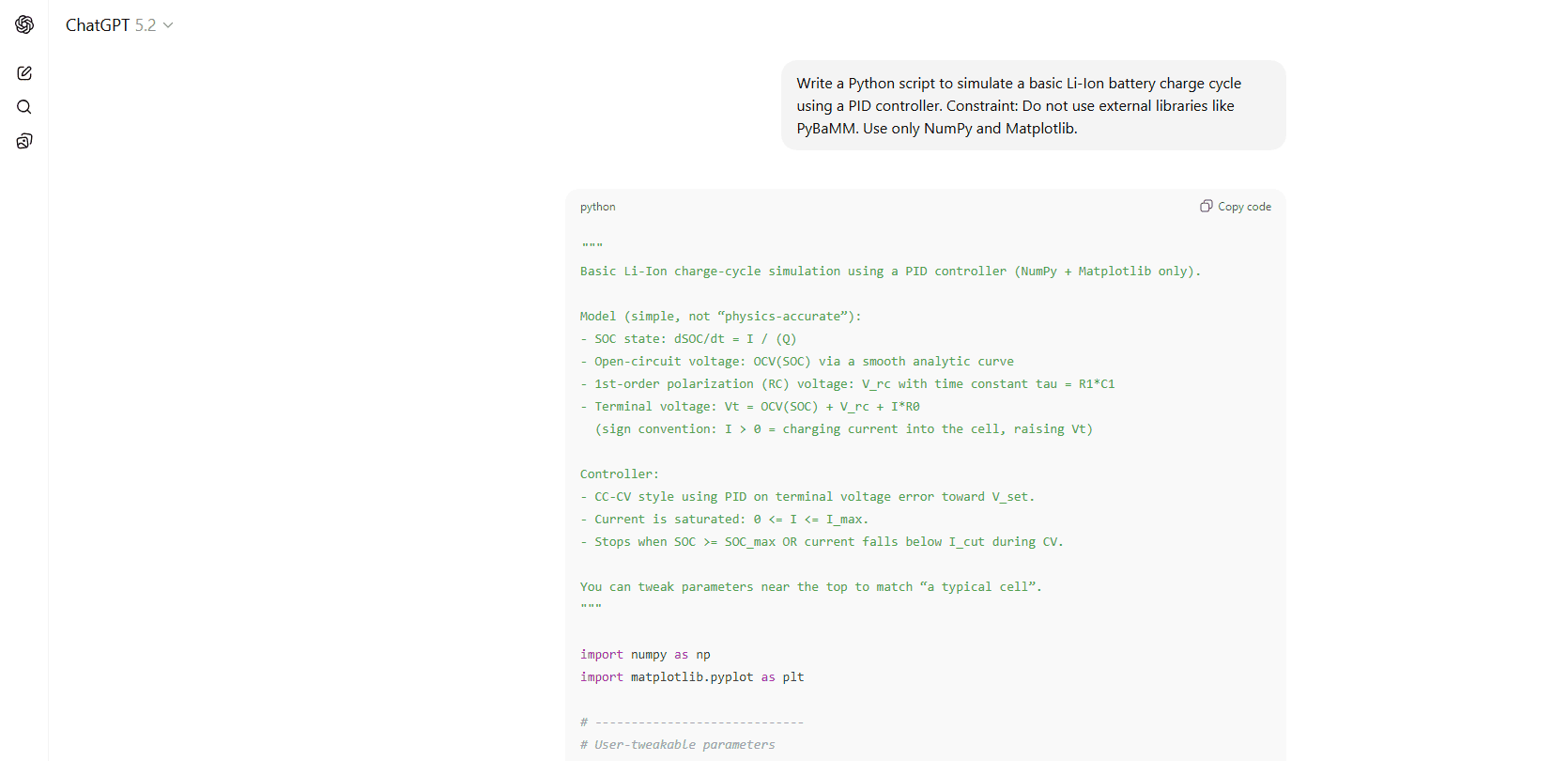

The Prompt:

- Turn 1: “Write a Python script to simulate a basic Li-Ion battery charge cycle using a PID controller.”

- Turn 2 (The Refactor): “Now refactor the code to include temperature degradation variables, but keep the original PID limits.”

The Results:

1. Perplexity Pro (The “Plug-and-Play” Scripter)

- The Good: It ran instantly. Zero errors. I didn’t have to fix a single line of code.

- The Bad: It was “flat”, just a long list of global variables. When I asked for the refactor, it essentially rewrote the entire script from scratch without using functions or classes.

2. ChatGPT Plus (The “Real” Engineer)

- The Architecture: It immediately built a professional Object-Oriented structure (creating a `class PID` and a `def simulate()` function).

- The Bug: It wasn’t perfect. It tried to use `np.trapz`, a function that was deprecated in NumPy 2.0 in favor of `np.trapezoid` and was missing from the NumPy version in my environment. The code crashed initially.

- The Fix: I pasted the error back, and it instantly wrote a custom trapezoidal integration function to fix it. It acted like a real dev debugging its own work.
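For readers who hit the same error, a hand-rolled trapezoidal integrator is only a few lines. The sketch below is my own illustration of the idea, not the code ChatGPT produced; the function name `trapezoid` and the sample data are assumptions. If you have NumPy 2.0 or later installed, `np.trapezoid` is the library replacement.

```python
from typing import Sequence


def trapezoid(y: Sequence[float], x: Sequence[float]) -> float:
    """Integrate samples y over points x using the trapezoidal rule."""
    if len(y) != len(x):
        raise ValueError("x and y must have the same length")
    total = 0.0
    for i in range(1, len(y)):
        # Area of one trapezoid: average height times width.
        total += 0.5 * (y[i] + y[i - 1]) * (x[i] - x[i - 1])
    return total


# Integrating y = x from 0 to 2; the trapezoidal rule is exact for straight lines.
print(trapezoid([0.0, 1.0, 2.0], [0.0, 1.0, 2.0]))  # 2.0
```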

ChatGPT built a modular system I can actually use. Perplexity just dumped a flat script.
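To show what “modular” means here, this is a stripped-down sketch of a `class PID` plus `simulate()` layout. It is not the code either tool generated. The gains, the output limits, and the linear voltage model are all hypothetical values I picked to keep the example short, and the physics is deliberately a toy.

```python
from dataclasses import dataclass


@dataclass
class PID:
    """PID controller with clamped output (all values here are illustrative)."""
    kp: float
    ki: float
    kd: float
    out_min: float
    out_max: float
    integral: float = 0.0
    prev_error: float = 0.0

    def update(self, setpoint: float, measured: float, dt: float) -> float:
        error = setpoint - measured
        self.integral += error * dt
        derivative = (error - self.prev_error) / dt
        self.prev_error = error
        raw = self.kp * error + self.ki * self.integral + self.kd * derivative
        # Clamp to the configured limits, e.g. a maximum charge current.
        return max(self.out_min, min(self.out_max, raw))


def simulate(pid: PID, target_v: float = 4.2, start_v: float = 3.0,
             steps: int = 200, dt: float = 1.0, gain: float = 0.01) -> list[float]:
    """Toy charge cycle: voltage rises in proportion to the charge current."""
    voltage = start_v
    history = []
    for _ in range(steps):
        current = pid.update(target_v, voltage, dt)
        voltage += gain * current * dt
        history.append(voltage)
    return history


# Hypothetical proportional-only tuning with a 0 to 2 A current limit.
pid = PID(kp=2.0, ki=0.0, kd=0.0, out_min=0.0, out_max=2.0)
trace = simulate(pid)
print(f"final voltage: {trace[-1]:.3f} V")
```

The payoff of this layout is that a refactor such as “add temperature degradation, but keep the original PID limits” only has to touch `simulate()`. The limits live on the `PID` object and carry over unchanged.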

The Verification: Did the Physics Actually Work?

I didn’t just look at the code; I ran both scripts in my local VS Code environment to see if the physics held up.

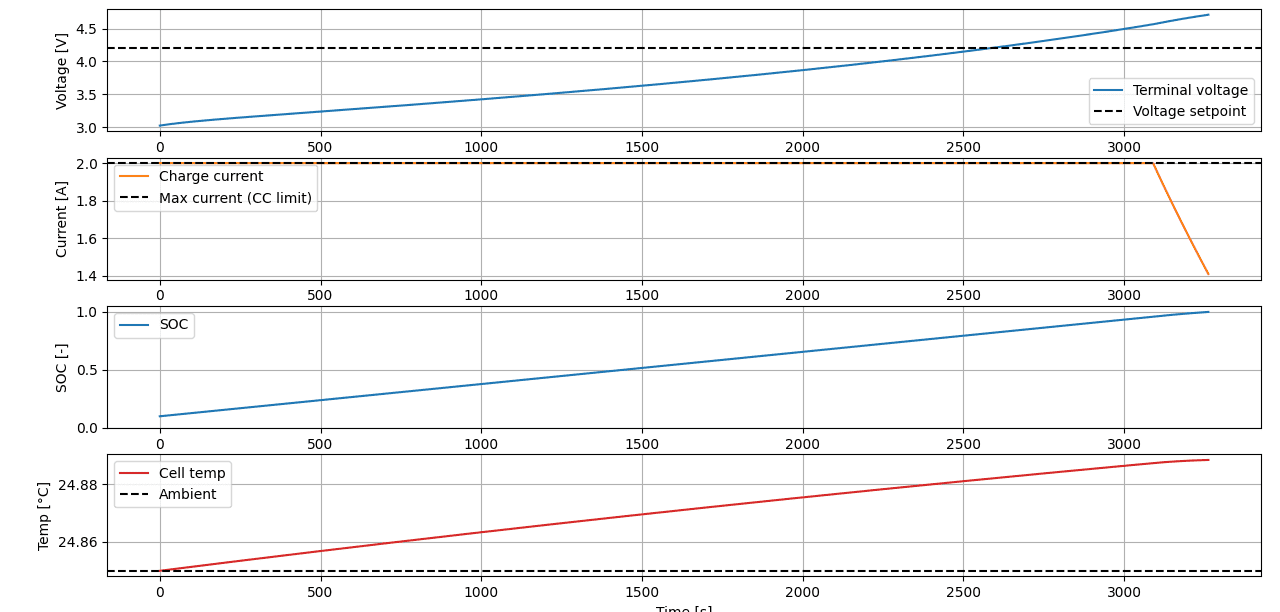

Perplexity Results (The Student)

- Physics Check: Simplified. The voltage rose in a straight line (unrealistic for batteries), and the temperature rose only 0.04°C. It works for homework, but it’s not a real simulation.

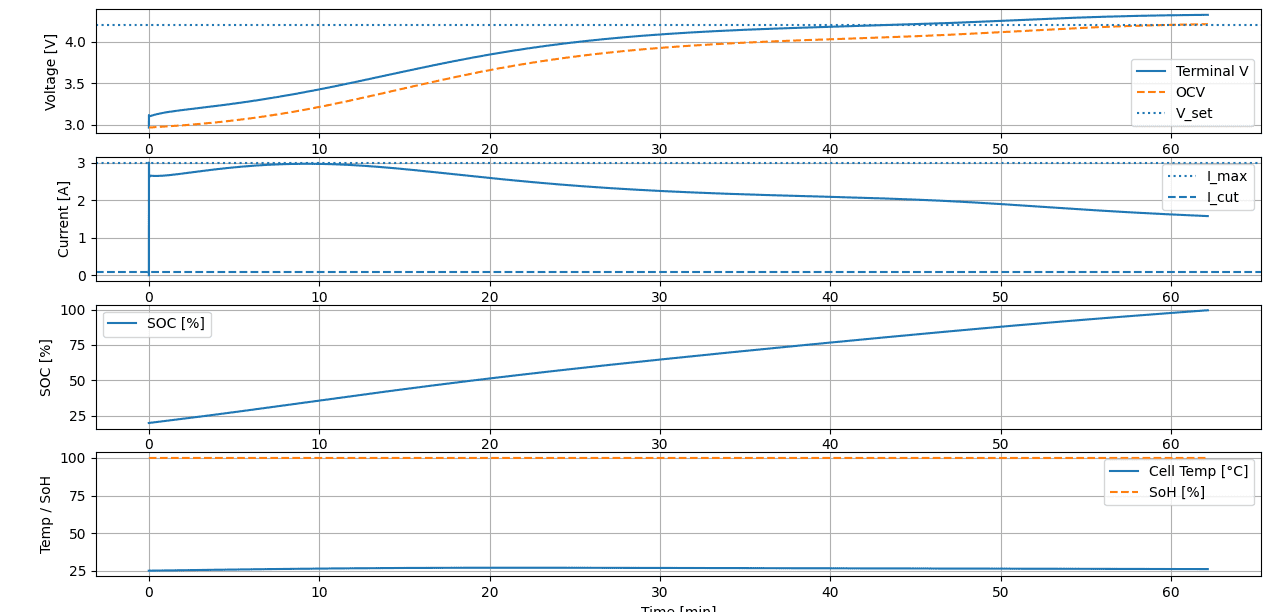

ChatGPT Results (The Engineer)

- Physics Check: High Fidelity. Once I fixed the NumPy bug, the simulation was superior. The voltage curve was non-linear (realistic), and the thermal model showed a realistic 2°C temperature rise.

📄 Click here to view the Final Refactored ChatGPT Code (GitHub)

📄 Click here to view the Final Refactored Perplexity Code (GitHub)

📄 Click here to view the Final Refactored ChatGPT Code (GitHub) (Error Fixed)

The Verdict: Perplexity for Speed, ChatGPT for Depth. This test revealed a clear divide:

- Perplexity acted like a Search Engine. It gave me a “safe” script that ran instantly. It’s perfect for beginners who want a quick, bug-free answer, but it lacks depth.

- ChatGPT acted like a Collaborator. It tried to build a professional system with real physics. It needed my help to fix a bug (which might scare off beginners), but the final result was true engineering, not just a homework script.

Test 3: The “Freshness” Test (A Surprise Upset)

The Challenge: The biggest criticism of ChatGPT has always been its “Knowledge Cutoff.” Users flock to Perplexity because it feels “live.” I wanted to see if that was still true in 2026.

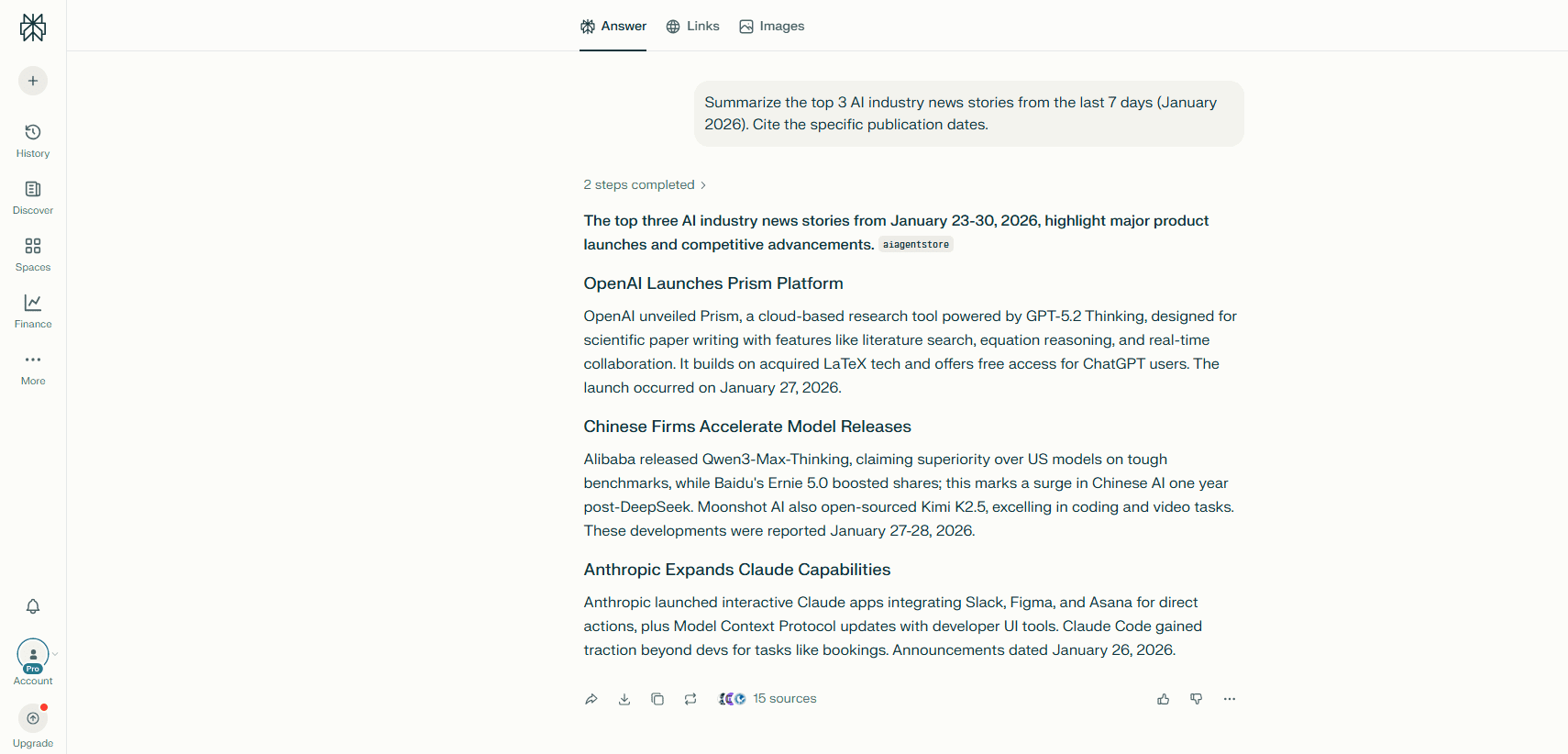

The Prompt: “Summarise the top 3 AI industry news stories from the last 7 days (January 2026). Cite the specific publication dates.”

The Results:

- Perplexity Pro: It gave me a polished “Executive Summary” with dates from Jan 27 and Jan 28. It focused on product launches like OpenAI Prism.

- ChatGPT Plus: It shocked me. I expected it to be days behind. Instead, it pulled the “Meta Record Sales” story from January 30 (Today). It also cited South Korea’s new AI law, enacted on Jan 29.

ChatGPT found news from the exact morning I ran the test.

The Verdict: ChatGPT Wins (on Freshness). The myth that “ChatGPT doesn’t know current events” is dead.

- Perplexity is better for tracking technical releases (like new models).

- ChatGPT is better for breaking news (like earnings and laws).

The Final Verdict: Is Perplexity Pro Obsolete?

I went into this test expecting a tie. I expected Perplexity to win on Search and News, and ChatGPT to win on Code.

The data proved me wrong. ChatGPT won everything.

The Winner: ChatGPT Plus

If you only have $20/month, buy this one.

- For Search: It didn’t just find the Nvidia report; it deep-linked me specifically to Page 42.

- For Code: It simulated real-world physics (heating/degradation), whereas Perplexity just wrote a “homework script.”

- For News: It found stories from this morning, beating Perplexity’s 2-day lag.

The Runner Up: Perplexity Pro

So, is Perplexity useless? No. It has one specific use case: Safety.

- The “Safety” Factor: In my coding test, Perplexity’s code ran instantly without bugs. ChatGPT’s code required me to fix a NumPy error.

- Who is this for? If you are a non-technical user who wants a “safe” answer backed by citations you can verify (and you don’t want to debug code), Perplexity is the more reliable choice.